Google Research’s TurboQuant is a credible, mathematically grounded approach to aggressive vector quantization that specifically targets memory-heavy parts of modern AI systems—most notably the KV cache in long-context LLM inference and high‑dimensional vector search. In Google’s reported tests, TurboQuant preserves benchmark outcomes while reducing KV-cache memory “by a factor of at least 6×,” and it can materially speed up a key attention computation on modern GPUs. However, the near-term impact on commodity DRAM (server RDIMMs; consumer DDR5) is likely to be indirect and expectation-driven rather than a sudden physical drop in DRAM demand: TurboQuant’s immediate savings mostly land in accelerator working memory (HBM/GPU memory), and broad hyperscaler deployment typically takes months of integration and validation. The key question for DRAM and RAM prices is therefore not “does TurboQuant work?” but “how fast does it propagate into production, and does efficiency reduce total memory consumption or enable more inference?”

What TurboQuant does technically

TurboQuant is introduced as an online, data-oblivious vector quantization method designed to preserve both mean-squared error (MSE) and inner-product geometry, which matters for attention (Q·K) and similarity search. The core idea, per the paper, is a two-stage design:

- Stage one: apply a random rotation to make coordinates more “well-behaved” statistically, then apply near‑optimal scalar quantizers per coordinate.

- Stage two: because MSE-optimal quantization can bias inner products, TurboQuant applies a 1‑bit Quantized Johnson–Lindenstrauss (QJL) transform on the residual to achieve unbiased inner-product estimation, with theory connecting distortion to information-theoretic lower bounds.

Two concrete “headline knobs” appear across the primary materials:

- Memory reduction: Google reports KV-cache memory reduced by ≥6× in long-context testing.

- Quality/compression tradeoff: the arXiv abstract reports “absolute quality neutrality” around 3.5 bits per channel and only “marginal” degradation around 2.5 bits per channel (midpoint assumption for planning: 3.0 bits/channel).

Important scope note: TurboQuant is framed primarily as compressing working/state memory (KV cache, vectors), not shrinking model weights in the usual sense—so it changes serving economics more than it changes “how many parameters fit.”

Evidence of performance and real-world maturity signals

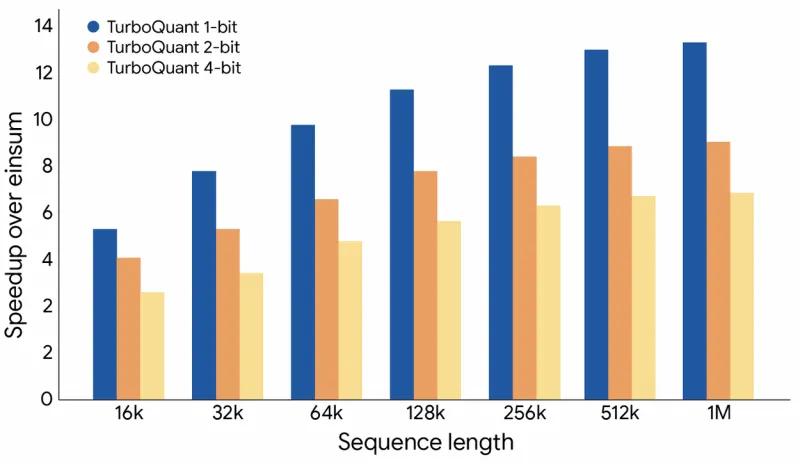

Primary benchmark claims. Google’s blog states that TurboQuant preserves downstream outcomes across cited long-context benchmarks while cutting KV-cache memory usage by at least 6×, and it mentions up to an 8× speedup for a key attention computation on H100-class GPUs (a kernel-level claim that may or may not translate linearly to end-to-end throughput). Ars Technica’s coverage echoes the same reported magnitude—6× memory reduction and 8× performance increase in some tests—helpful as an independent readout of Google’s messaging (but still ultimately rooted in Google’s results).

Source: Google research blog

Source: Google research blog

Paper/preprint detail. TurboQuant as near-optimal (within a small constant factor) relative to information-theoretic limits and claims strong results in nearest-neighbor search while reducing indexing time toward zero. The OpenReview PDF (ICLR submission) includes evidence that TurboQuant can match full-precision outcomes at 4× KV compression (i.e., 25% KV cache size) in evaluated settings—useful grounding that “6×” is not the only operating point.

Code and ecosystem signals. A public GitHub repository implements TurboQuant KV-cache compression with vLLM integration and reports benchmarking across GPUs, which is a meaningful (if unofficial) signal that the method is implementable in mainstream inference stacks. In parallel, community issue threads requesting TurboQuant support in popular inference projects (e.g., vLLM) indicate early interest, but they are not evidence of production deployment at scale.

Adoption timing and deployment risks

TurboQuant’s near-term economic impact depends on deployment, and deployment depends on engineering reality:

- Integration cost: new quantization schemes must be wired into kernels, memory layouts, and scheduling, then validated across model families and context regimes. TechCrunch explicitly notes the method has not yet been broadly deployed, framing it as a research breakthrough rather than “now everywhere.”

- Risk tolerance: even “marginal” quality changes can be unacceptable for certain enterprise or safety-critical use cases; production rollouts often start with particular traffic slices or internal workloads.

- Workload fit: TurboQuant’s biggest wins are most plausible where KV cache is the bottleneck (long contexts, memory-bound inference). The Register argues it’s a “big deal” but not a magic end to memory constraints, emphasizing scope limits and second-order effects.

Assumption (explicit): deployment lag 3–18 months, midpoint 10.5 months, from announcement to meaningful hyperscaler penetration (varies by stack, procurement, and validation demands). This is a planning assumption, not something the sources quantify.

Implications for DRAM demand and RAM prices

TurboQuant changes the story in two different timelines.

Short-term (April–summer 2026): the expectations channel dominates. The market reaction shows this clearly: Bloomberg reporting described a selloff in memory-related stocks after TurboQuant publicity, reflecting investor concern that “AI will need endless memory” might be less certain. Reuters likewise flagged TurboQuant as a technology factor affecting views on future memory demand, even as it reported strong AI-driven pricing for memory. None of this implies immediate DRAM glut; it implies re-priced expectations and potentially more cautious incremental procurement at the margin.

Medium term (late 2026–2027): structural effects are plausible but ambiguous. TurboQuant could reduce the amount of working memory (often HBM) needed per inference stream, and it could compress vector indexes that are sometimes kept in DRAM for latency reasons. But efficiency can also enable more inference volume, longer contexts, and new product categories—offsetting savings.

Explicit assumptions used for scenarios

- KV-cache compression factor (effective): 4× (conservative), 6× (base; anchored to “≥6×”), 8× (aggressive). Midpoint of 4–8 is 6×.

- Bits/channel operating point: 2.5–3.5, midpoint 3.0 (planning), with lower bits risking more quality loss.

- Addressable DRAM share inside AI inference servers: 10–30%, midpoint 20% (represents KV-cache offload into host memory + in-DRAM vector indexes in some deployments; highly deployment-dependent). Assumption.

- Adoption penetration at 12–18 months: 20% / 40% / 60% for conservative/base/aggressive. Assumption.

- Training impact: limited (TurboQuant targets inference KV cache and vector search more directly).

Scenario table: estimated DRAM demand reduction within AI workloads

These are within inference/training DRAM footprints, not global DRAM demand.

| Scenario | Effective memory saving (addressable part) | Adoption (12–18 months) | Est. DRAM reduction in inference workloads | Est. DRAM reduction in training workloads |

|---|---|---|---|---|

| Conservative | 4× | 20% | ~1–3% | ~0–0.5% |

| Base | 6× | 40% | ~4–7% | ~0.5–1% |

| Aggressive | 8× | 60% | ~10–15% | ~1–2% |

Interpretation: these ranges assume a midpoint addressable DRAM share of ~20% and do not include any rebound (usage growth) effect. If the rebound is strong, the net reduction could be smaller or even negative.

Retail/module vs contract: Does TurboQuant explain the April 2026 retail RAM drops?

TurboQuant is not a convincing mechanical explanation for April’s consumer DDR5 price corrections. Retail RAM prices are driven by consumer demand, distributor inventory, and module/channel dynamics; TurboQuant primarily targets AI inference memory economics and is not yet widely deployed.

The more defensible conclusion is: TurboQuant is mainly an expectations channel in April 2026—powerful enough to move stocks and narratives, and perhaps to influence procurement sentiment—but it likely did not cause consumer DDR5 retail pullbacks directly. TurboQuant is referenced as an evolving-tech risk to future demand even amid strong AI-driven price pressure.